We live in an era that devalues conformity, while simultaneously preserving it in many interesting ways. Everyone is allowed to have an opinion. Divergent views produce conflict, however, and disagreement, argument, and debate define our current moment.

If we merely disagreed on matters of taste (our favorite color, music, movies, etc.), we could avoid such conflicts. Increasingly, though, we disagree on more fundamental ideas. Some deny the spherical shape of the Earth and the heliocentric model of the solar system (I highly recommend Behind the Curve, a documentary about this movement). Arguments of all shapes and sizes spring up everywhere: capitalism vs. socialism, humanity’s role in climate change, and so on.

The democratization of virality amplifies these disagreements. Previously obscure ideas can quickly become widely known. Competing ideological camps endlessly try to score points on one another. The internet rewards this behavior with fame and other social capital. Various forms of what I’ll call “intellectual denial of service” act to reinforce this dynamic. I’ll describe one of these attack vectors in this post.

Bad infinitum

Say that you stumble upon an idea, X, that contradicts widespread consensus views. X explains something you previously didn’t understand or doubted, in a way that now makes perfect sense. The consensus believers have their own idea, Y. They may have degrees in a relevant field, popular best-selling books, or any number of other indicators of social cachet and expertise.

You take your idea and present it to one or more of them as a challenge: “here is why you’re wrong about Y.” They’re likely to respond indignantly, as you’ve just attacked their competence and expertise, perhaps even their livelihoods. Sadly, defensiveness rarely produces the best arguments.

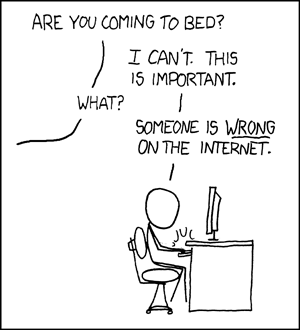

They might quote-tweet you with a snarky comment: “get a load of this rube.” Or maybe they simply ignore you. If you’re lucky, they will attempt to engage with you and present evidence for their beliefs that contradicts your premises. This can go back and forth for a while, but it seldom ends with someone changing their mind.

Once we have publicly attached our name to an idea, the path of least resistance is to continue believing it. We love to preserve self-consistency and hate to admit when we’re wrong. [Insert obligatory list of cognitive biases like confirmation bias, the Dunning-Kruger effect, the availability heuristic, etc.]

Now you each go your separate ways, each likely more convinced of your own position than before. Triumphant, you post a tweet about your idea and go to bed. When you wake up, you see that others have found your idea compelling. They, too, have decided to confront the other side with X and the evidence supporting it. The X crowd inundates the Y crowd with more demands for evidence and more challenges to their expertise.

The Y’s may start off by responding politely to each challenger, but at some point they will run out of energy. They’ll say “I’m done talking about X,” or simply stop responding. Tired of retreading the same ground, they give up in exhaustion.

This is the ultimate coup! The opposing army has thrown down its arms and the castle is undefended! The conversation becomes more and more one-sided: lots of X proponents shouting on one side, annoyed silence or open hostility on the Y side.

The bad infinitum cycle has started. As experts in the Y camp become increasingly defensive and hostile, the X camp gains prominence through attrition. Non-experts deride experts as weak, corrupt, or misguided. A feedback loop forms: pro-X people attack the experts, who eventually get exhausted and give up. The pro-X people present this as further evidence for X. More people flock to X based on this supposed victory, and so on. New X proponents rehash the same arguments over and over again, frustrating and bogging down the Y’s. Returning to harmony requires breaking this feedback loop.

Asymmetric warfare

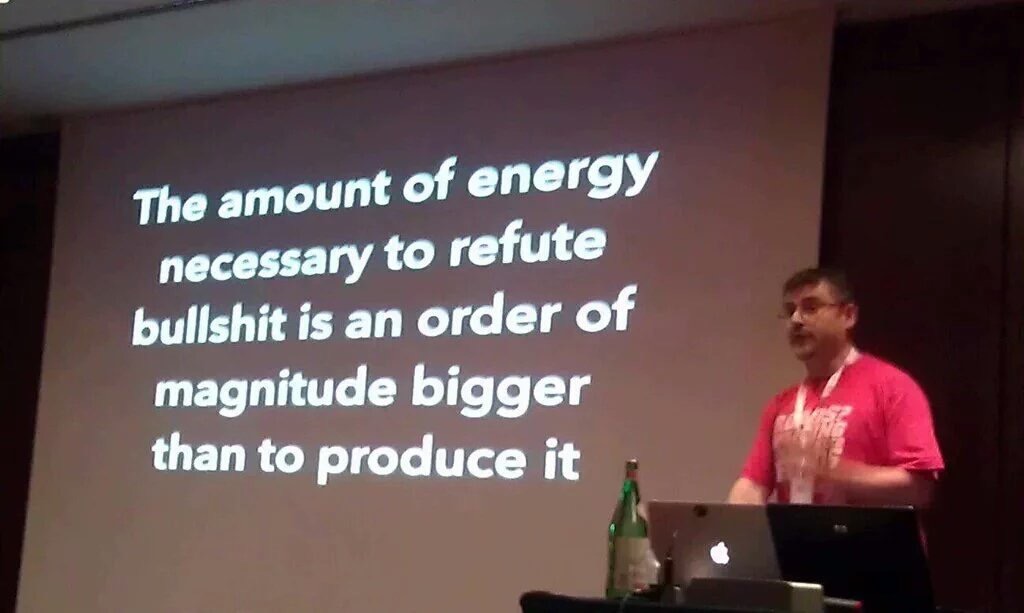

This dynamic contains an important asymmetry. In any given field, far more people can grok simple, intuitive (but wrong) ideas than can grasp nuanced and complex ones. Something like a Pareto distribution can form, with maybe 20% of people holding 80% of the understanding and expertise. Further, if you believe in the Dunning-Kruger effect, experts tend to underestimate their own competence relative to others, weakening their defenses.

The 80% can quickly overwhelm the 20% with demands for explanations and evidence. Such demands require little effort, while placing a large burden on the other side to carefully craft a counter-argument and assemble the available data. Once assembled, counter-arguments may be misinterpreted or simply ignored (remember, this is a conversation between experts and non-experts), representing wasted effort on the part of the expert.

Every minute spent refuting X takes away energy that could be spent refining Y. Experts, rejecting this bargain, concede the commons to non-experts and retreat to their own insulated communities. The X crowd cheers its victory, and Veritas, the goddess of truth, weeps.

Anyone familiar with denial-of-service attacks on the internet may recognize some similarities here: as unproductive traffic overwhelms available bandwidth, legitimate requests time out. Likewise, as experts spend their intellectual horsepower refuting bad arguments, they have less and less time for more productive endeavors.

Bad infinitum is but one form of this attack, and I will cover others in future posts. I hope you’ll bear with me, as I’m likely to spawn even more neologisms in the process :)